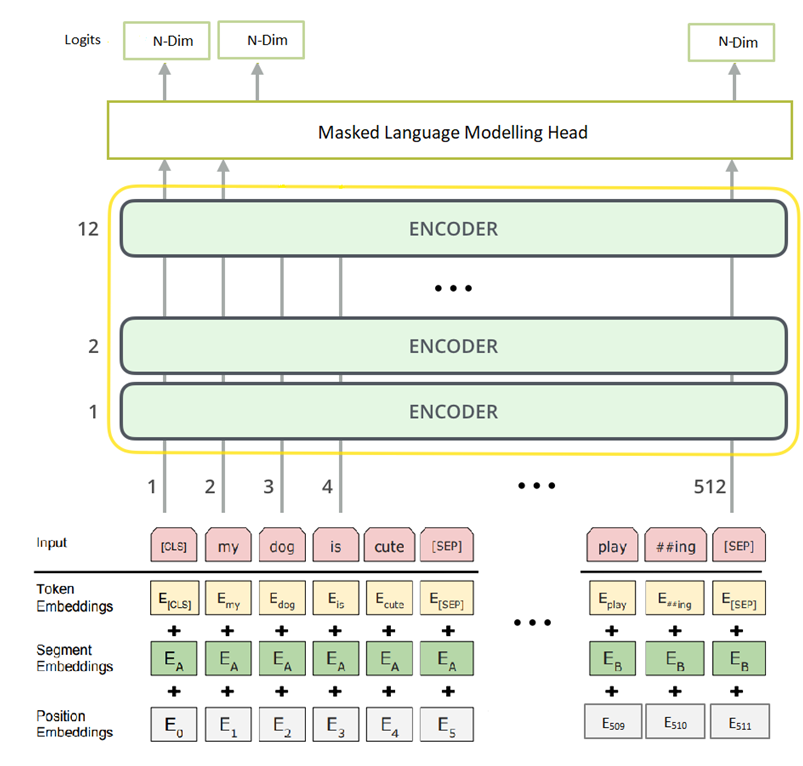

Researchers From China Propose A New Pre-trained Language Model Called 'PERT' For Natural Language Understanding (NLU) - MarkTechPost

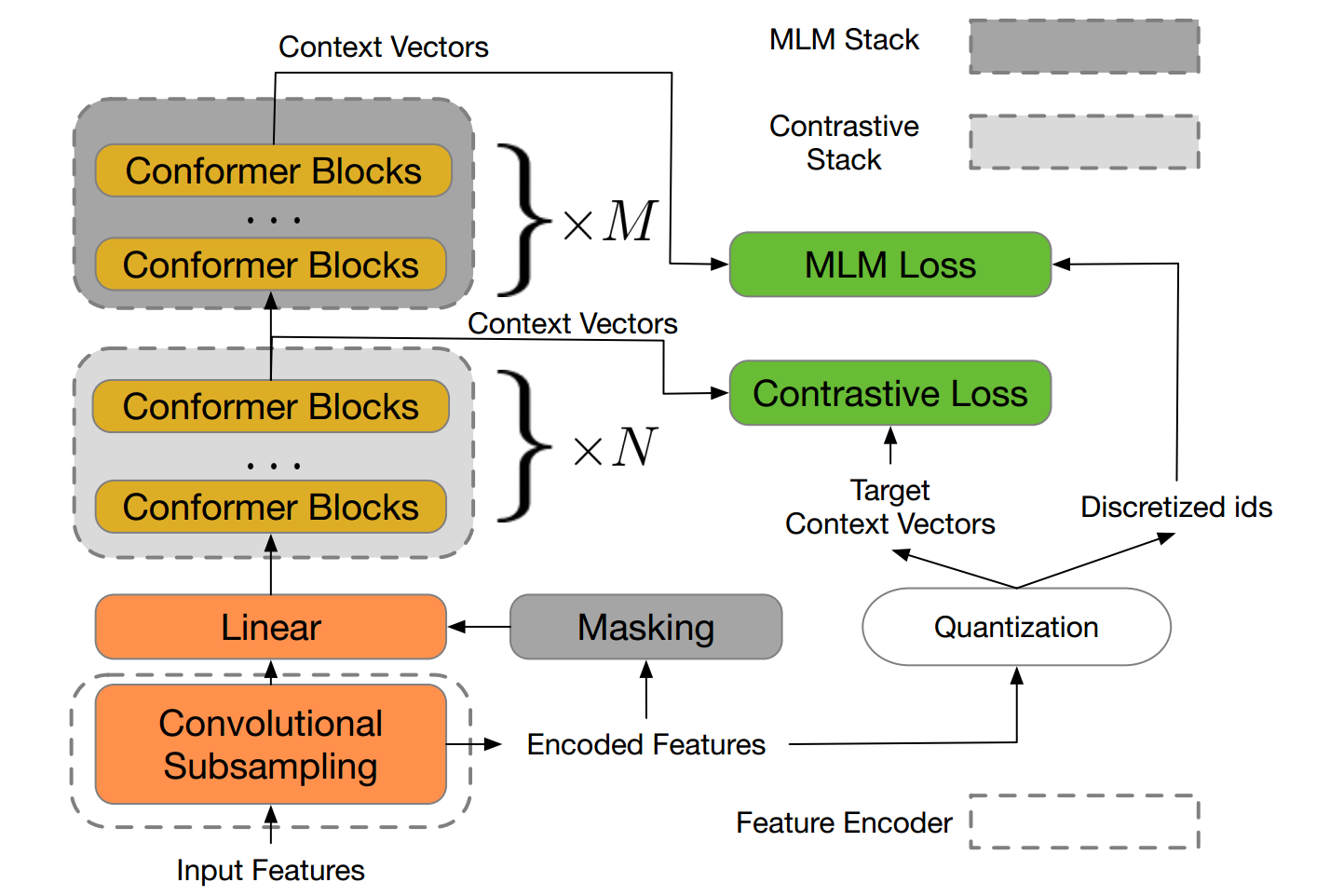

W2V-BERT: Combining contrastive learning and masked language modeling for self-supervised speech pre-training

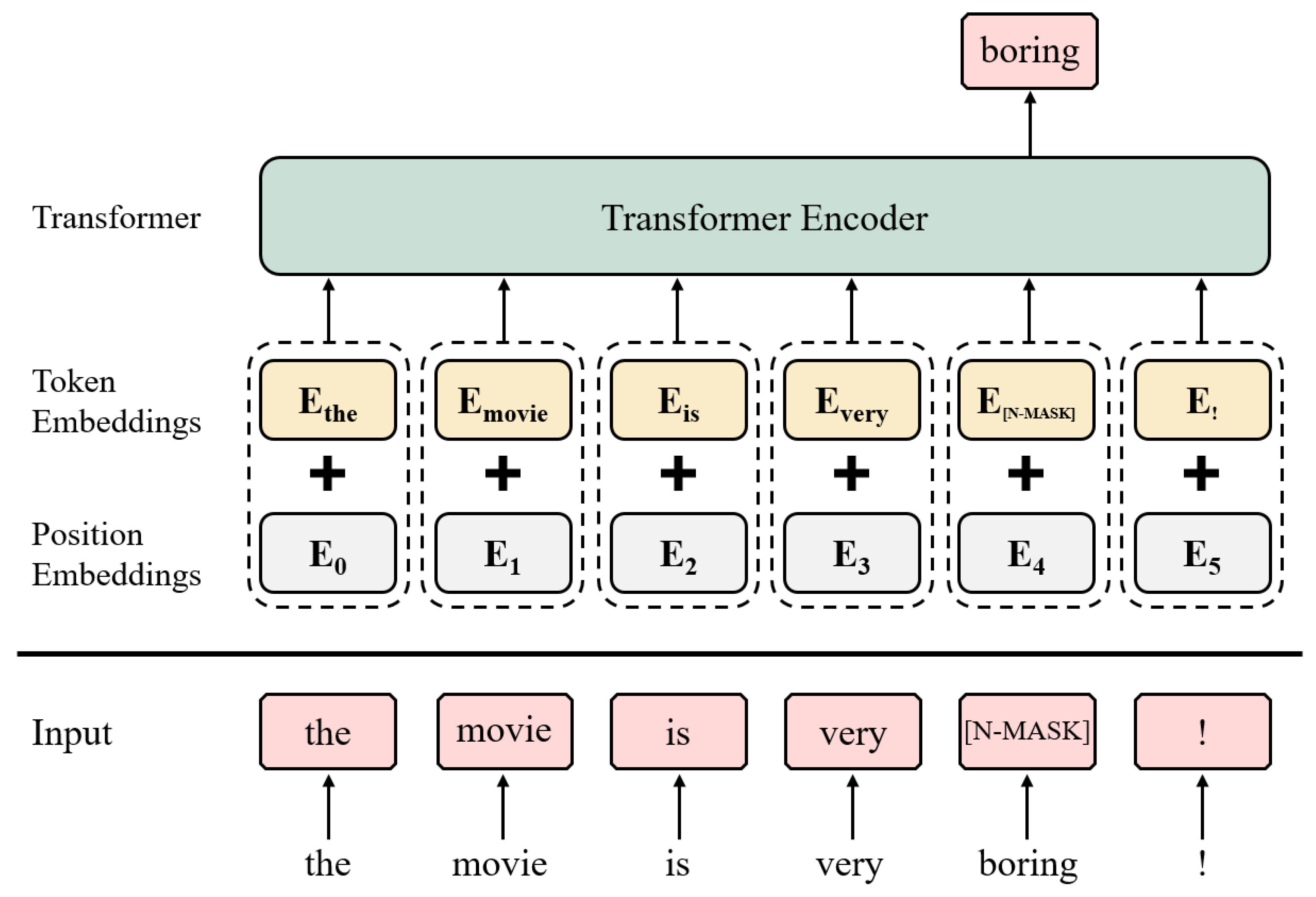

RoBERTa—masked language modeling with the input sentence: The cat is... | Download Scientific Diagram

![What the [MASK]? Making Sense of Language-Specific BERT Models | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/6551f742b825561d26242ca8a646ba0e33fb109f/3-Figure1-1.png)